Short summary of work showing problem & impact.

Video Infrastructure Migration: Mainstreaming to Brightcove

I architected and built the end-to-end migration pipeline for international video accounts, transitioning from Mainstreaming to Brightcove. Designed automated pipelines to transfer legacy video entities and synchronize metadata across the CDN boundary. Upgraded core backend services and data pipelines to natively support Brightcove as the primary CDN provider — zero service downtime during transition.

Go, AWS, SNS, SQS, Data Migration, CDN, Brightcove, Backend Engineering

Wearable Data Integration & Gamification Engine

I designed and built the platform's first wearable health data integration, ingesting real-time step counts and metrics from Spike & Thryve into the AWS-based event pipeline. Independently developed the Steps-as-a-Goal feature from API contract to production — physical activity milestones directly trigger rewards in the contest engine. Built to handle burst ingest from irregular wearable sync schedules without backpressure on downstream services.

Go, AWS Lambda, DynamoDB, SNS, SQS, Event-Driven, Data Pipelines, Gamification

Advanced CDN Transition & Metadata Enrichment

I led the global video migration from Akamai to Brightcove, extending the platform's event-driven architecture to propagate Brightcove CDN state changes (publish, update, delete) in real time across the service mesh via AWS SNS & SQS. Designed a scalable annotation capability to attach structured metadata to video resources, enabling interactive overlay features in client-side video players.

Go, AWS, SNS, SQS, Data Migration, CDN, Brightcove, Event-Driven, Architecture

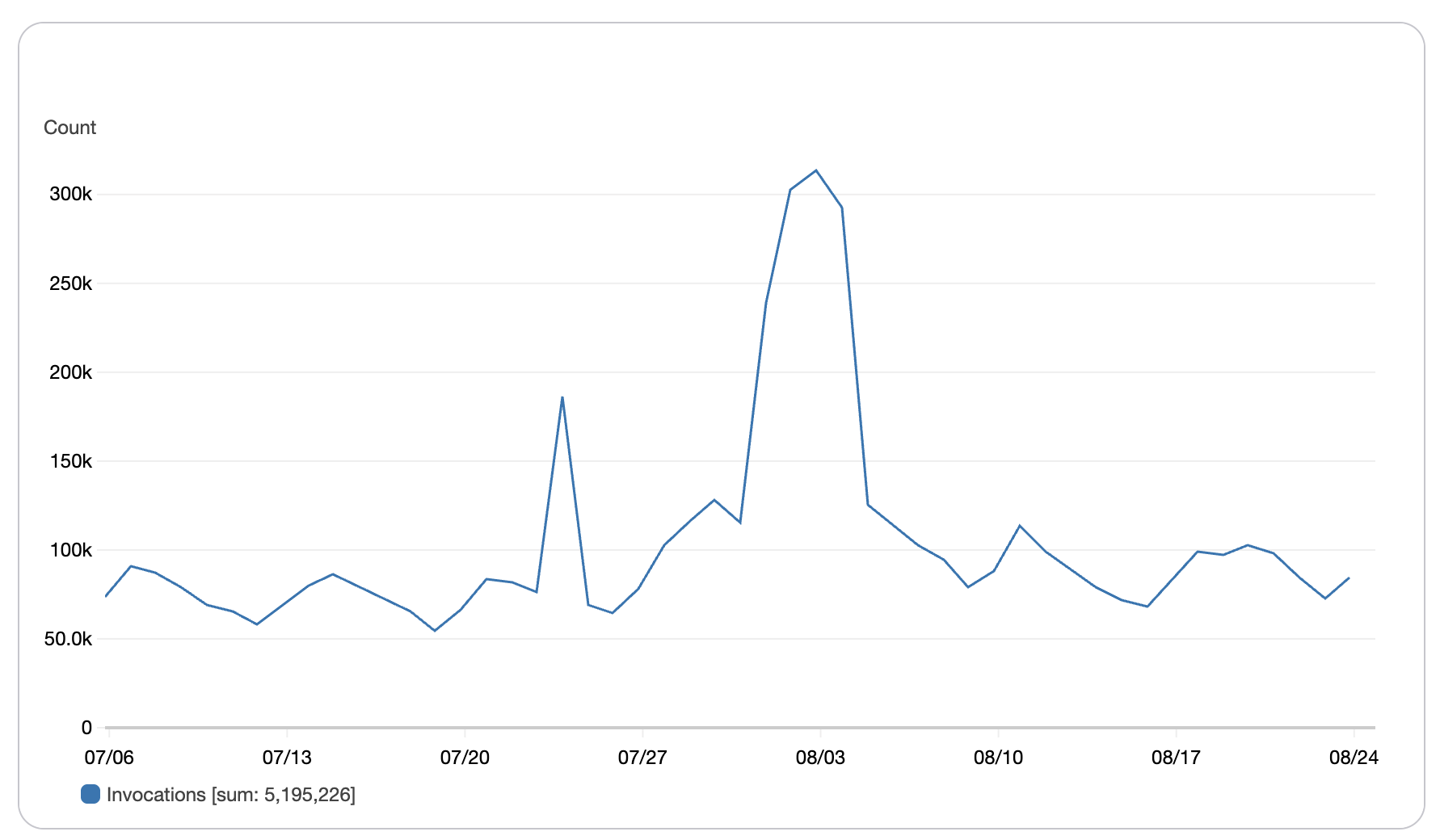

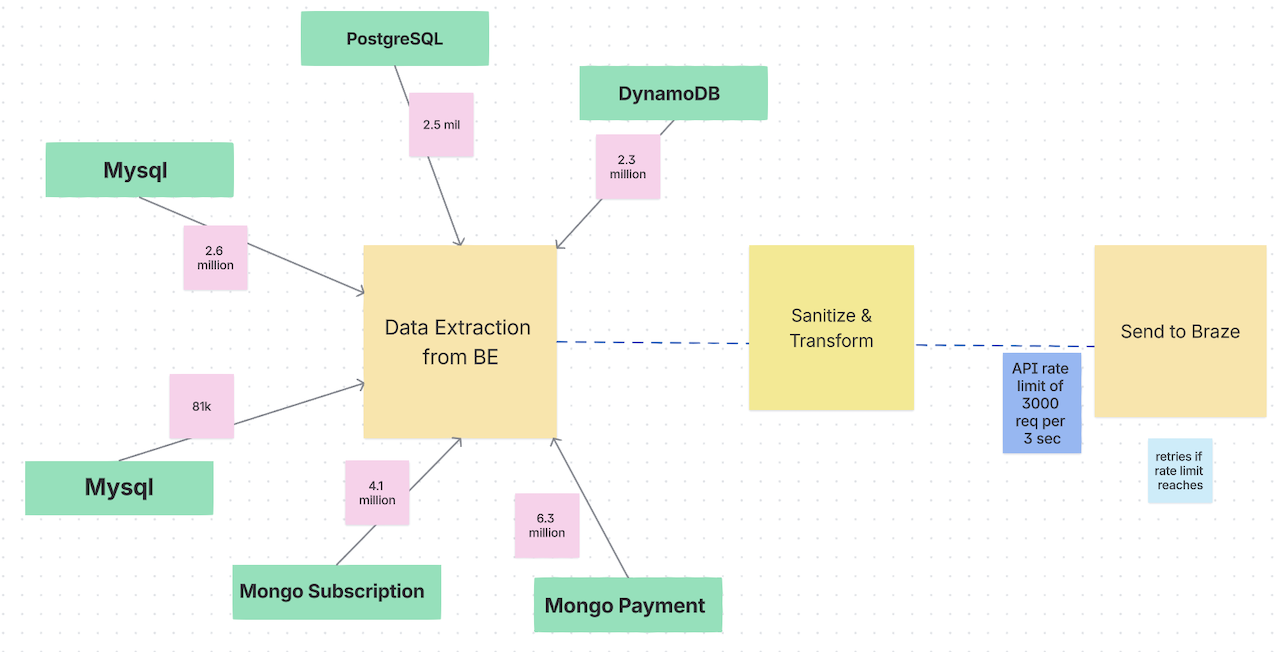

Real-time ETL Pipeline & CRM Migration

I built a high-throughput ETL pipeline in Go processing ~80K events/hour, migrating 2.8M+ customer records from a legacy CRM stack (Emarsys) to Braze. Replaced fragile nightly cron jobs with an event-driven pipeline, cutting data latency from up to 24 hours to near real time. Led the full migration, including users, subscriptions, and historical data, with zero downtime.

Go, ETL, Event-Driven, Braze, AWS Lambda, DynamoDB, MySQL, MongoDB

Event-driven Data Ingestion & Search System

I designed the event-sourced architecture to capture high-throughput data changes with strong consistency. Built scalable REST APIs backed by Elasticsearch for low-latency full-text search, directly improving user experience for content discovery.

Event Sourcing, Elasticsearch, Kotlin, Java, REST API, Microservices, Architecture

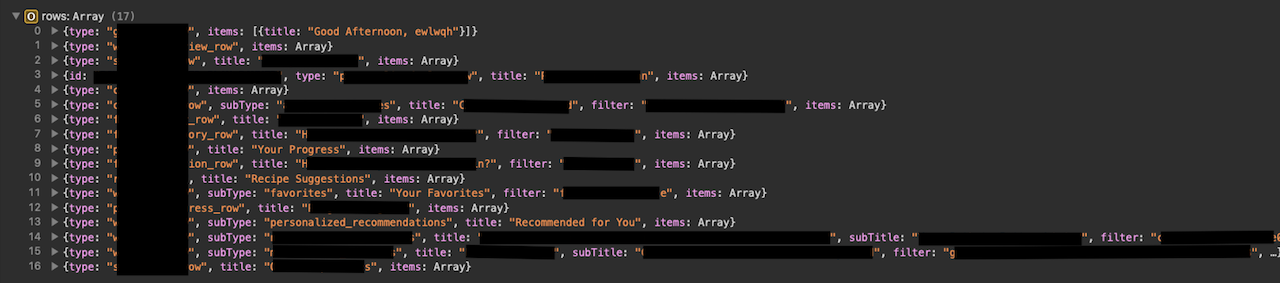

Content Recommendation Service

I built the platform's personalized recommendation engine using AWS Personalize, including custom filter APIs and a Redis caching layer. Optimized response times through data restructuring and caching strategies, improving content discovery and user engagement metrics.

Go, AWS Personalize, Redis, REST API, Microservices, Caching, Serverless

Platform Data Migration & Multi-Locale Support

I led the migration of 100K+ users, content items, subscriptions, and historical records from an acquired platform to the current system — zero data loss, minimal downtime. Implemented multi-locale backend support to ensure a seamless experience for global users across markets.

Go, Data Migration, Multi-locale, MySQL, Data Engineering, Architecture

AI-powered Healthy Meal Scanner

I designed and built the platform's first AI-powered food analysis feature — a fully serverless Go pipeline on AWS Lambda, DynamoDB, and S3 that identifies food from images and returns personalized nutritional guidance. Applied prompt engineering and context design to improve prediction accuracy for diverse meal types.

Go, AWS Lambda, DynamoDB, S3, AI, Serverless, Prompt Engineering

Unified API Gateway

I consolidated 12 downstream service calls into a single client endpoint using AWS Lambda and Go goroutines for concurrent request aggregation. Eliminated multi-version API maintenance, reduced client-side complexity, and improved performance for Web, iOS, and Android by standardizing DTO contracts across services.

Go, AWS Lambda, Serverless, API Gateway, Goroutines, System Design
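
For readers curious about the aggregation pattern, here is a minimal sketch of concurrent fan-out with goroutines. It is an illustration under stated assumptions, not the production code: `Fetcher`, `Result`, `Aggregate`, `demoFetchers`, `runDemo`, and the two fake services are hypothetical names, and the real gateway's error handling and timeouts may differ.

```go
package main

import (
	"context"
	"errors"
	"fmt"
	"sort"
	"sync"
	"time"
)

// Fetcher is a hypothetical stand-in for one downstream service call.
type Fetcher func(ctx context.Context) (string, error)

// Result holds one section of the aggregated response.
type Result struct {
	Value string
	Err   error
}

// Aggregate runs every fetcher in its own goroutine and collects the
// results by name. A failing call is recorded in its Result rather than
// aborting the whole response.
func Aggregate(ctx context.Context, fetchers map[string]Fetcher) map[string]Result {
	var (
		mu  sync.Mutex
		wg  sync.WaitGroup
		out = make(map[string]Result, len(fetchers))
	)
	for name, fetch := range fetchers {
		wg.Add(1)
		go func(name string, fetch Fetcher) {
			defer wg.Done()
			v, err := fetch(ctx)
			mu.Lock() // the map is shared, so writes are serialized
			out[name] = Result{Value: v, Err: err}
			mu.Unlock()
		}(name, fetch)
	}
	wg.Wait()
	return out
}

// demoFetchers returns two fake services (assumed for illustration only).
func demoFetchers() map[string]Fetcher {
	return map[string]Fetcher{
		"profile": func(ctx context.Context) (string, error) {
			select {
			case <-time.After(10 * time.Millisecond): // simulated latency
				return "profile-data", nil
			case <-ctx.Done():
				return "", ctx.Err()
			}
		},
		"rewards": func(ctx context.Context) (string, error) {
			return "", errors.New("rewards unavailable")
		},
	}
}

// runDemo aggregates the fake services under a shared deadline.
func runDemo() map[string]Result {
	ctx, cancel := context.WithTimeout(context.Background(), 2*time.Second)
	defer cancel()
	return Aggregate(ctx, demoFetchers())
}

func main() {
	res := runDemo()
	names := make([]string, 0, len(res))
	for n := range res {
		names = append(names, n)
	}
	sort.Strings(names) // map order is random, so sort for stable output
	for _, n := range names {
		fmt.Printf("%s: value=%q err=%v\n", n, res[n].Value, res[n].Err)
	}
}
```

With this shape, total latency tracks the slowest downstream call rather than the sum of all calls, and one failing service degrades only its own section of the response.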

DTO Consistency Framework

I established standardized DTO contracts across 5+ services, enforcing modular, row-based API responses. Decoupled client DTOs from backend domain models, eliminating the need for multi-version API maintenance (v1/v2/v3) and reducing client integration time across teams.

Go, API Contracts, Architecture, Microservices, System Design